Global Optimization (GO)

A globally optimal solution is one where there are no other feasible solutions with better objective function values. A locally optimal solution is one where there are no other feasible solutions "in the vicinity" with better objective function values. You can picture this as a point at the top of a "peak" or at the bottom of a "valley" which may be formed by the objective function and/or the constraints -- but there may be a higher peak or a deeper valley far away from the current point.

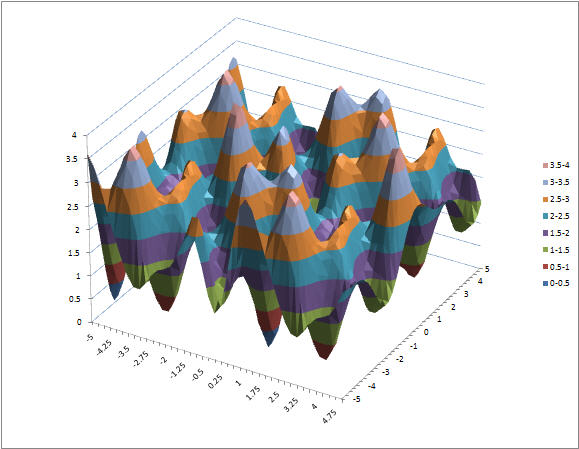

In convex optimization problems, a locally optimal solution is also globally optimal. These include LP problems; QP problems where the objective is positive definite (if minimizing; negative definite if maximizing); and NLP problems where the objective is a convex function (if minimizing; concave if maximizing) and the constraints form a convex set. But many nonlinear problems are non-convex and are likely to have multiple locally optimal solutions, as in the chart above. These problems are intrinsically very difficult to solve, and the time required to solve them increases rapidly with the number of variables and constraints.
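To make the difference between local and global optima concrete, here is a minimal sketch in Python. The objective `f(x) = x^4 - 3x^2 + x` is an illustrative choice (it is not taken from the chart), and plain gradient descent stands in for a "classical" smooth nonlinear solver. The step size and iteration count are assumptions chosen for this one function.

```python
def f(x):
    """Non-convex objective with two valleys (an illustrative choice)."""
    return x**4 - 3 * x**2 + x


def df(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1


def local_descent(x, step=0.01, iters=2000):
    """Plain gradient descent: a stand-in for a local smooth solver."""
    for _ in range(iters):
        x -= step * df(x)
    return x


# The same local method, started from two different points,
# settles in two different valleys.
x_left = local_descent(-2.0)
x_right = local_descent(2.0)

print(f"start -2.0 -> x = {x_left:.4f}, f(x) = {f(x_left):.4f}")
print(f"start +2.0 -> x = {x_right:.4f}, f(x) = {f(x_right):.4f}")
```

Both end points are locally optimal (the derivative is essentially zero at each), but only the one with the lower objective value is globally optimal. The local method by itself has no way to tell which one it has found.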

GO Methods

Multistart methods are a popular way to seek globally optimal solutions with the aid of a "classical" smooth nonlinear solver (that by itself finds only locally optimal solutions). The basic idea is to automatically start the nonlinear solver from randomly selected starting points, reaching different locally optimal solutions, and then to select the best of these as the proposed globally optimal solution. Multistart methods have a limited guarantee that (given certain assumptions about the problem) they will "converge in probability" to a globally optimal solution. This means that as the number of runs of the nonlinear solver increases, the probability that the globally optimal solution has been found also increases toward 100%.
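The multistart idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the objective, the search interval [-2, 2], the gradient-descent local solver, and the function name `multistart` are all hypothetical choices, not part of any particular product. If each random start lands in the basin of the global optimum with probability p, then n independent starts all miss it with probability (1 - p)^n, which is the sense in which the method "converges in probability".

```python
import random


def f(x):
    """Non-convex objective with two valleys (an illustrative choice)."""
    return x**4 - 3 * x**2 + x


def df(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1


def local_descent(x, step=0.01, iters=2000):
    """Plain gradient descent: a stand-in for a local smooth solver."""
    for _ in range(iters):
        x -= step * df(x)
    return x


def multistart(n_starts, lo=-2.0, hi=2.0, seed=0):
    """Run the local solver from random starts and keep the best result."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    best_x = None
    for _ in range(n_starts):
        x = local_descent(rng.uniform(lo, hi))
        if best_x is None or f(x) < f(best_x):
            best_x = x
    return best_x


best = multistart(20)
print(f"best of 20 starts: x = {best:.4f}, f(x) = {f(best):.4f}")
```

Note that the result is still only a proposed global optimum: the method reports the best local solution it happened to reach and offers no certificate that nothing better exists.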

Where Multistart methods rely on random sampling of starting points, Continuous Branch and Bound methods are designed to systematically subdivide the feasible region into successively smaller subregions, and find locally optimal solutions in each subregion. The best of the locally optimal solutions is proposed as the globally optimal solution. Continuous Branch and Bound methods have a theoretical guarantee of convergence to the globally optimal solution, but this guarantee usually cannot be realized in a reasonable amount of computing time for problems with more than a small number of variables. Hence many Continuous Branch and Bound methods also use some kind of random or statistical sampling to improve performance.
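A one-variable sketch shows the subdivide-and-prune mechanics. It is a simplification rather than a description of any production solver: instead of running a local solver in each subregion, it evaluates the objective at the midpoint and uses a Lipschitz constant to bound how much lower the function could be anywhere in the interval. The objective, the interval [-2, 2], and the constant 21 (the largest absolute value of the derivative on that interval for this particular function) are assumptions made for illustration.

```python
import heapq


def f(x):
    """Non-convex objective with two valleys (an illustrative choice)."""
    return x**4 - 3 * x**2 + x


def branch_and_bound(f, lo, hi, lipschitz, tol=1e-4):
    """Minimize f on [lo, hi] to within tol, given |f'(x)| <= lipschitz."""

    def evaluate(a, b):
        # Midpoint value, plus a lower bound valid on the whole interval.
        mid = 0.5 * (a + b)
        val = f(mid)
        return val - lipschitz * (b - a) / 2, mid, val

    lower, best_x, best_f = evaluate(lo, hi)
    heap = [(lower, lo, hi)]  # subregions ordered by lower bound
    while heap:
        lower, a, b = heapq.heappop(heap)
        if lower >= best_f - tol:
            break  # no remaining subregion can improve by more than tol
        mid = 0.5 * (a + b)
        for left, right in ((a, mid), (mid, b)):  # branch
            child_lower, child_mid, child_val = evaluate(left, right)
            if child_val < best_f:
                best_x, best_f = child_mid, child_val
            if child_lower < best_f - tol:  # bound: keep only promising halves
                heapq.heappush(heap, (child_lower, left, right))
    return best_x, best_f


bb_x, bb_f = branch_and_bound(f, -2.0, 2.0, lipschitz=21.0)
print(f"branch and bound: x = {bb_x:.4f}, f(x) = {bb_f:.4f}")
```

Unlike the random-sampling approach, the loop stops only when every discarded subregion is proven unable to beat the incumbent by more than `tol`. The price is that the number of subregions to examine grows quickly with the number of variables, which is why the guarantee is hard to realize in practice.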

Genetic Algorithms, Tabu Search and Scatter Search are designed to find "good" solutions to nonsmooth optimization problems, but they can also be applied to smooth nonlinear problems to seek a globally optimal solution. They are often effective at finding better solutions than a "classical" smooth nonlinear solver alone, but they usually take much more computing time, and they offer neither guarantees of convergence nor tests for having reached the globally optimal solution.
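As a rough illustration of the first of these, here is a very small real-coded genetic algorithm in Python. Everything in it is an assumption made for the sketch: the objective, the bounds, the population size, tournament selection, blend crossover, Gaussian mutation, and the name `genetic_minimize`. Real implementations differ widely in these choices, and Tabu Search and Scatter Search are not shown.

```python
import random


def f(x):
    """Non-convex objective with two valleys (an illustrative choice)."""
    return x**4 - 3 * x**2 + x


def genetic_minimize(f, lo, hi, pop_size=30, generations=60,
                     sigma=0.1, seed=0):
    """Tiny genetic algorithm for one bounded variable (uses no derivatives)."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    pop = [rng.uniform(lo, hi) for _ in range(pop_size)]

    def tournament():
        # Pick two members at random and keep the fitter one.
        a, b = rng.choice(pop), rng.choice(pop)
        return a if f(a) < f(b) else b

    for _ in range(generations):
        children = [min(pop, key=f)]  # elitism: carry the best member over
        while len(children) < pop_size:
            p1, p2 = tournament(), tournament()
            w = rng.random()
            child = w * p1 + (1 - w) * p2      # blend crossover
            child += rng.gauss(0.0, sigma)     # Gaussian mutation
            children.append(min(max(child, lo), hi))  # clamp to bounds
        pop = children

    best = min(pop, key=f)
    return best, f(best)


ga_x, ga_f = genetic_minimize(f, -2.0, 2.0)
print(f"genetic algorithm: x = {ga_x:.4f}, f(x) = {ga_f:.4f}")
```

The loop only ever calls `f`, never its derivative, which is why this family of methods also applies to nonsmooth problems. It simply stops after a fixed number of generations, with no test that the best member found is globally optimal.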
